NVIDIA's Strategic Gambit: From Training to Full-Stack AI Dominance 💎

NVIDIA's announcements at the start of 2025 signal a major shift in the AI industry. The acquisition of Grok, a startup specializing in AI inference chips, for approximately $22 billion, together with the unveiling of the 'Alpha Mayo' autonomous driving platform, shows NVIDIA's ambition to vertically integrate the AI stack. Taken together, these moves extend NVIDIA from its position as the undisputed leader in AI training into AI inference and real-world applications, directly challenging incumbents like Tesla and reshaping competitive dynamics for startups worldwide.

The Grok Acquisition: Closing the Inference Gap and Raising the Bar ⚡

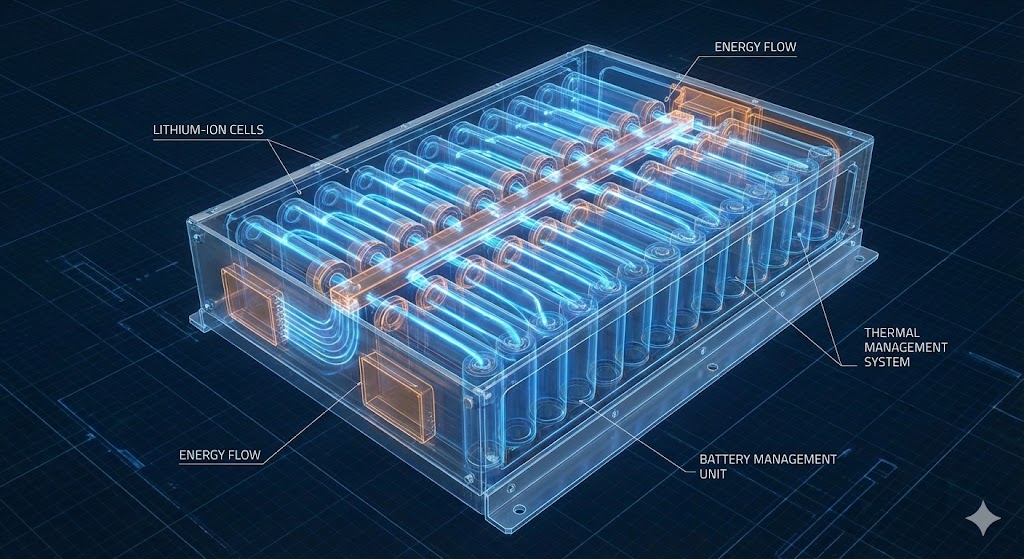

Historically, NVIDIA's strength lay in its GPUs optimized for the computationally intensive training of large AI models. The inference market—running trained models in production—presented opportunities for rivals focusing on efficiency and speed. Startups globally, including several in South Korea, have carved niches in this space.

The Grok acquisition fundamentally alters this landscape. By integrating best-in-class inference technology, NVIDIA is building an end-to-end AI solution powerhouse. Community sentiment on tech forums suggests this creates a significant challenge for smaller players, akin to a local boutique facing competition from a well-funded national chain. The bar for entry and survival is now dramatically higher, pushing startups to prove truly disruptive and defensible technological advantages beyond mere cost-performance ratios.

The Hardware Frontline: Specs, Costs, and Market Control 📊

NVIDIA backs these moves with specific performance claims. The new Vera Rubin architecture is said to deliver a 5x improvement in AI inference performance and a 3.5x boost in training performance over its predecessor, Blackwell. Perhaps more important for commercial adoption is the claimed tenfold reduction in cost per token. If that holds in practice, businesses deploying AI services on NVIDIA hardware would gain a substantial cost advantage, which could lock in market share.
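To make the cost-per-token claim concrete, here is a minimal back-of-the-envelope sketch in Python. The baseline price and monthly token volume are hypothetical placeholders chosen only for illustration; they are not NVIDIA figures. The only number taken from the claims above is the tenfold reduction factor.

```python
def reduced_rate(baseline_usd_per_million: float, reduction_factor: float) -> float:
    """Price per million tokens after dividing the baseline by a claimed reduction factor."""
    if reduction_factor <= 0:
        raise ValueError("reduction_factor must be positive")
    return baseline_usd_per_million / reduction_factor


def monthly_serving_cost(tokens_per_month: float, usd_per_million: float) -> float:
    """Monthly bill for serving a given token volume at a given price per million tokens."""
    return tokens_per_month / 1_000_000 * usd_per_million


# Hypothetical inputs (assumptions, not vendor data).
BASELINE_USD_PER_MILLION = 2.00      # assumed current price per million tokens
TOKENS_PER_MONTH = 5_000_000_000     # assumed workload: 5 billion tokens per month

# The one figure taken from the article: a claimed 10x cost-per-token reduction.
CLAIMED_REDUCTION = 10.0

baseline_bill = monthly_serving_cost(TOKENS_PER_MONTH, BASELINE_USD_PER_MILLION)
new_rate = reduced_rate(BASELINE_USD_PER_MILLION, CLAIMED_REDUCTION)
new_bill = monthly_serving_cost(TOKENS_PER_MONTH, new_rate)

print(f"Baseline monthly bill: ${baseline_bill:,.2f}")
print(f"Bill at claimed rate:  ${new_bill:,.2f}")
print(f"Monthly difference:    ${baseline_bill - new_bill:,.2f}")
```

Under these assumed inputs the bill scales linearly with the price per token, so a tenfold reduction in cost per token translates directly into a tenfold smaller serving bill. Real deployments would also depend on utilization, batching, and hardware pricing, none of which this sketch models.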

🔍 NVIDIA's AI Platform Strategy Analysis

| Platform/Acquisition | Primary Focus | Target Market | Impact on Competition |

|---|---|---|---|

| Grok | AI Inference Optimization | Data Centers, Edge Computing | Consolidates inference market, raises barriers for startups |

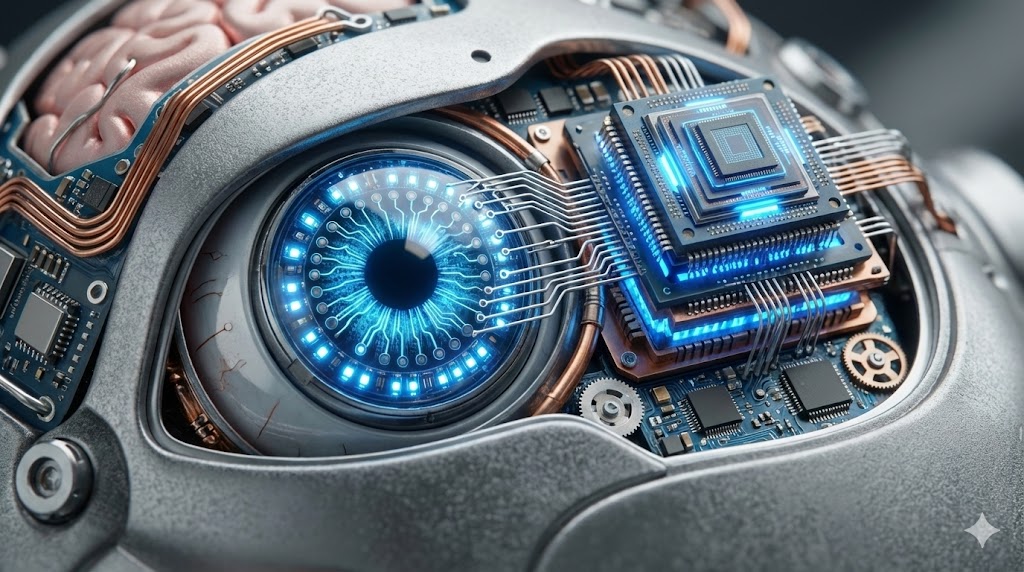

| Alpha Mayo | Autonomous Driving (with Linguistic Reasoning) | Automotive OEMs (e.g., Mercedes-Benz) | Challenges Tesla, expands ecosystem competition |

| Legacy GPUs (H100, B100) | AI Training Optimization | Cloud, Research | Maintains dominant position |

This vertical integration strategy is expected to solidify NVIDIA's grip on the AI computing market, where it reportedly holds a share of over 90%. Discussions on platforms like Reddit suggest a prevailing view that NVIDIA's momentum is likely to continue for the next 5 to 10 years.

Conclusion: Navigating the New AI Paradigm 🎯

NVIDIA's moves with Grok and Alpha Mayo underscore that the AI industry is evolving beyond pure hardware performance wars into a battle of integrated platforms and ecosystems. For competitors and startups, this presents both a crisis and a catalyst. The path forward may involve hyper-specialization in niche markets, deep software-hardware co-design, or developing tailored inference solutions for specific verticals like healthcare or robotics.

In the autonomous driving race, Alpha Mayo's approach of incorporating 'linguistic reasoning' to address the AI black box problem highlights a crucial direction for broader societal adoption of advanced technology. Ultimately, technological progress is measured not just in teraflops, but in trust and explainability.